What is a DXP and how does it differ from a CMS?

A DXP manages digital experiences for humans and AI agents. What it is, how it differs from a CMS, and why architecture matters more than ever.

Key Takeaways

- Three types of visitors: People, crawlers, and now AI agents (ChatGPT, Perplexity, Gemini, Claude). LLM traffic converts at up to nine times the rate of traditional organic traffic.

- Architecture, not features: A monolithic CMS packages content as HTML; a composable DXP exposes it as structured data through APIs, schema markup, and MCP — the native language of agents.

- GEO and AEO depend on the platform: Generative and Answer Engine Optimization are not isolated content tactics; they are consequences of architecture. A composable DXP enables them by design.

Until recently, a website was designed for only one type of visitor: people. Search engines sent bots to index content, but the digital experience itself was built exclusively for human users.

That has changed. Today, a growing fraction of the traffic that reaches your site does not come from people opening a browser, but from AI agents (Perplexity, ChatGPT, Gemini, Claude) that navigate, read, extract and cite content autonomously to answer their users’ questions. And those users, when they arrive through an AI recommendation, convert at a rate up to nine times higher than traditional organic traffic (Amsive, 2026).

In this context, the question of which digital platform to use is no longer just a conversation about editorial autonomy or multichannel delivery. It is a conversation about architecture. And the architecture of a composable DXP is structurally better prepared for this new scenario than any monolithic CMS.

This article explains what a DXP is, how it differs from a CMS, what types exist, and why — in the era of AI agents — the choice of platform has implications that go far beyond who can publish without depending on IT.

What is a DXP (Digital Experience Platform)?

A DXP, or Digital Experience Platform, is a platform that integrates the capabilities needed to manage, personalize and deliver digital experiences coherently across multiple channels and touchpoints.

Gartner defines it as “an integrated and cohesive piece of technology designed to enable the composition, management, delivery and optimization of contextualized digital experiences across the entire customer journey.”

The key lies in that last part: across the entire journey. A CMS manages website content. A DXP manages the complete user experience, from the first visit to conversion, retention, and beyond. And in 2026, that journey includes AI-mediated interactions in which the user never visits your site directly.

The evolution from CMS to DXP

The CMS was born to solve a problem from the nineties: enabling editors to publish web content without knowing how to code. WordPress, Drupal, Joomla — all of these platforms respond to that same original need.

For two decades it worked well. Websites were mostly informational, the digital channel was essentially the desktop web, and users would arrive, read, and leave.

That model stopped being enough as soon as three things happened at once:

- The user became multichannel. The same prospect visits the site on mobile, receives an email, sees an ad on LinkedIn and returns to the site a week later. Every touchpoint generates data; the traditional CMS ignores it.

- Personalization went from luxury to expectation. Users expect the site to know who they are and what they’re looking for. Traditional CMSs serve the same content to everyone.

- Digital teams grew in complexity. Marketing, communications, recruitment, admissions, student services: all need to publish and all need data. The traditional CMS was not designed for multidisciplinary teams at scale.

Now a fourth transformation is happening, deeper than the previous ones: AI agents have become a new type of web user. They are not passive crawlers like Google’s bots. They are systems that actively navigate, consume content, reason about it, and cite it in answers that will reach millions of users without those users visiting your site.

The composable DXP is the only architecture designed to respond to all four shifts simultaneously.

DXP vs CMS: the key differences

| Capability | Traditional CMS | Composable DXP |
|---|---|---|
| Content management | ✅ Complete | ✅ Complete |
| Web publishing | ✅ Single channel | ✅ Multichannel |
| Personalization | ❌ Manual or non-existent | ✅ Automated by profile |
| User analytics | ❌ Requires external integration | ✅ Integrated |
| API delivery (content as data) | ❌ HTML coupled to template | ✅ Structured JSON by default |
| Readability by AI agents | ⚠️ Depends on HTML parsing | ✅ Structured natively |
| MCP compatibility | ❌ Requires additional development | ✅ API-first by design |
| Native schema markup | ⚠️ Requires additional plugins | ✅ Generated from content fields |
| Native integrations | ⚠️ Limited (plugins) | ✅ Open ecosystem |
| Editorial scalability | ⚠️ Limited | ✅ Designed for large teams |

The difference is not just one of features. It is a difference in architectural philosophy. A CMS assumes content is published to be rendered on a screen. A composable DXP assumes content is data that can be consumed by any interface — human or automated.

Types of DXP: monolithic vs composable

Not all DXPs are alike. The most relevant distinction today is between the monolithic model and the composable model.

Monolithic DXP

It is a closed suite: everything — CMS, personalization, analytics, ecommerce, search — comes from the same vendor, integrated out of the box. The advantage is that it works from day one with little configuration. The limitation is that this integration comes at the price of rigidity: changing one piece means changing the whole. And adapting to the pace of AI change with a monolithic architecture is, in practice, very difficult.

Composable DXP (or headless)

The composable model decouples the pieces. The CMS manages content, but the presentation layer, the personalization engine, the analytics system and the integrations are independent components connected by API. This is what the industry calls MACH architecture (Microservices, API-first, Cloud-native, Headless).

The advantages are clear: you can choose the best tool for each function, update components independently, and — this is what matters most in 2026 — connect new AI capabilities without redesigning the whole.

It is estimated that by 2026, 70% of organizations will have adopted some form of composable digital experience architecture (Gartner). The headless CMS market is projected to grow from $3.94 billion in 2025 to $22.28 billion by 2034.

Griddo is a composable DXP built on MACH architecture. The editorial team has full autonomy over the author experience; the technical team retains control over the infrastructure without managing manual updates or security patches.

The third user of your site: the AI agent

For decades, websites had two types of visitors: people and crawlers. The first sought information; the second indexed for search engines. The digital visibility strategy was designed for both.

Since 2025 there has been a third type of visitor, and it doesn’t resemble either of the previous ones.

AI agents — the conversational search engines from Perplexity, ChatGPT, Gemini and Claude — don’t just index your content: they read it, interpret it, compare it with other sources, and cite it (or not) in answers that reach the end user directly. There is no click to the site. There is no GA4 session. But there is a recommendation, or there isn’t.

In July 2025, Perplexity launched Comet, a full Chromium-based browser where its agent can navigate sites autonomously, fill out forms and complete multi-step tasks. In October 2025, OpenAI launched ChatGPT Atlas, which embeds the assistant in every browser tab. The open-source Browser-Use framework, launched to allow any agent to navigate the web, reached an 89% success rate on standard web navigation benchmarks in 2025.

The data confirms the trend

Traffic referred from LLMs converts at rates far higher than traditional organic traffic:

- AI-referred traffic converts at a rate three times higher than other channels (Microsoft Clarity, 2026)

- ChatGPT-referred visits convert at 15.9%, compared to 1.76% for Google organic — a nine-fold difference (Amsive, 2026)

- In B2B specifically, LLM traffic converts four to six times more than standard organic search

- Two out of every three conversions from an LLM happen within the first seven days

- 51% of B2B buyers now start their research on an AI chatbot, up from 29% in April 2025 — a 22-point jump in twelve months (G2, 2026)

LLM traffic is still a small fraction of the total (less than 2% of referrals on most sites), but it grew 527% year over year between early 2024 and early 2025, and is projected to represent between 20% and 28% of total referral traffic by the end of 2026.

This redefines the goal of digital strategy: ranking on Google is no longer enough. You need to be the source that AI agents cite when someone asks about your category.

Why a composable DXP is better prepared for GEO and AEO

GEO (Generative Engine Optimization) and AEO (Answer Engine Optimization) are the strategic response to this new scenario. But the ability to execute them depends directly on the platform’s architecture. It is not a content strategy question; it is an infrastructure question.

A composable DXP has structural advantages over a monolithic CMS in four dimensions:

1. Content is data, not a page

In a monolithic CMS, content lives inside HTML templates. The text of an article, its metadata, its sections, its images — everything is mixed into an HTML blob that must be parsed to extract the real information.

In a composable DXP, content is stored as structured fields: title, body, author, published_date, faq_items, category. When an AI agent queries it, it receives clean and semantically rich JSON, not HTML surrounded by navigation menus, footers and ad banners.

AI systems operate on data. A composable DXP speaks their language natively.
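As a rough illustration of "content as data", the sketch below models an article with the fields named above and serializes it to JSON. The class names, the `to_delivery_json` helper and the sample values are assumptions made for this example; they do not describe any particular platform's API.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class FaqItem:
    question: str
    answer: str


@dataclass
class Article:
    # Field names mirror the ones listed in the text above.
    title: str
    body: str
    author: str
    published_date: str  # ISO 8601 date string
    category: str
    faq_items: list[FaqItem] = field(default_factory=list)


def to_delivery_json(article: Article) -> str:
    """Serialize an entry roughly as a delivery API might return it (hypothetical)."""
    return json.dumps(asdict(article), indent=2, ensure_ascii=False)


# Sample entry with made-up values, for illustration only.
article = Article(
    title="What is a DXP?",
    body="A DXP integrates the capabilities needed to manage digital experiences.",
    author="Editorial team",
    published_date="2026-01-15",
    category="platforms",
    faq_items=[FaqItem("Is a DXP a CMS?", "No. A CMS is one component of a DXP.")],
)

print(to_delivery_json(article))
```

A consumer of this output gets each field by name, with no navigation, footer or banner markup to strip away first.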

2. Schema markup generated from fields, without plugins

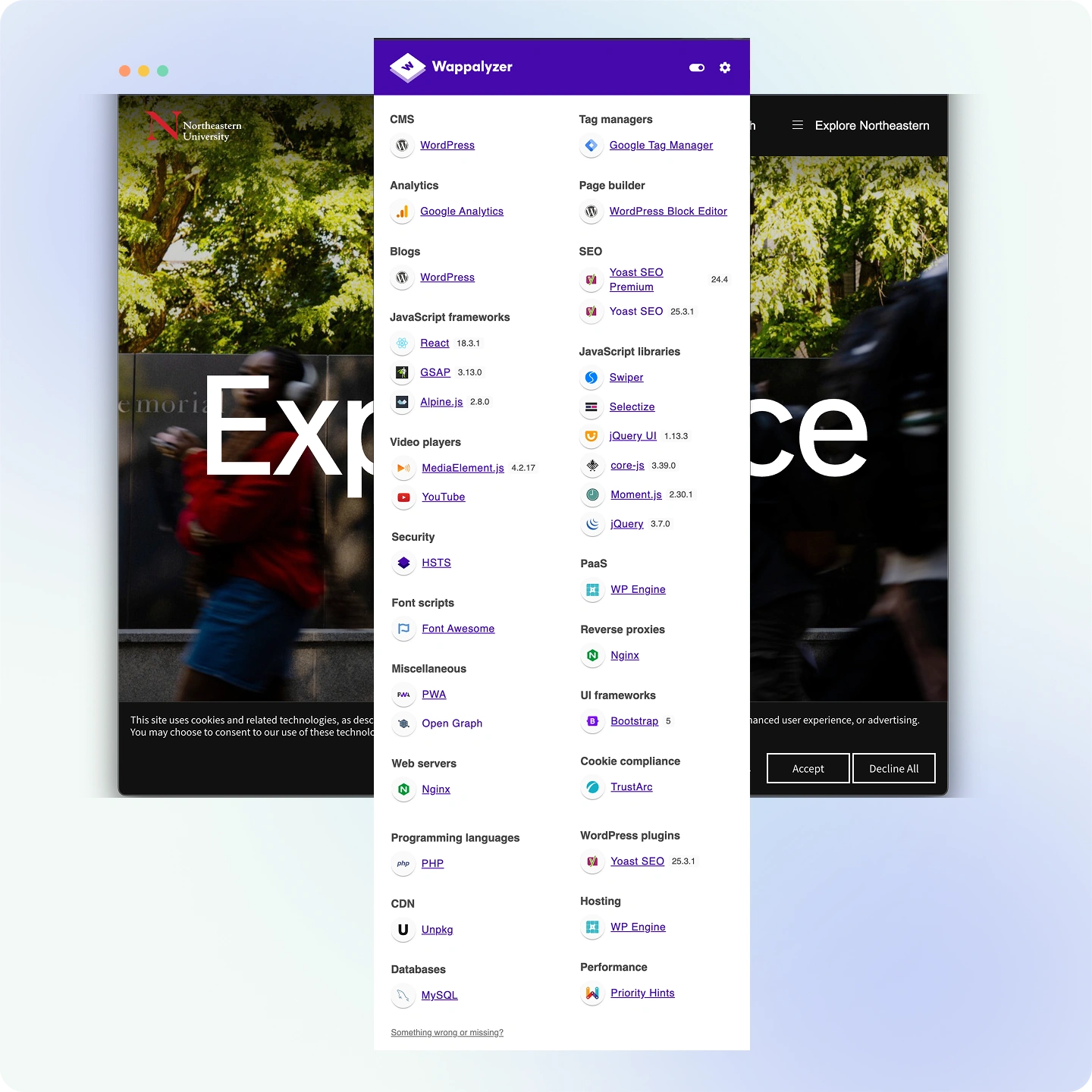

Schema markup (JSON-LD) is the structured data layer that Google, Bing and AI engines use to understand the type and context of content. In a monolithic CMS like WordPress, it requires additional plugins (Yoast, Rank Math) that generate markup from unstructured content, and the result is frequently incomplete or out of date.

In a composable DXP, the content fields are already structured. Generating Article, FAQPage, Course, Event or Organization schema is simply mapping existing fields to an output format — a trivial operation that can be automated and kept up to date in real time.

This matters because LLMs use schema markup during the indexing phase (via Google/Bing) to classify the type and authority of the content they will cite.
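The field-to-schema mapping described above can be sketched in a few lines. The input dictionary shape and the function names are assumptions for illustration; the output uses the schema.org `Article` and `FAQPage` types and their standard properties.

```python
import json


def article_jsonld(entry: dict) -> dict:
    """Map structured content fields to a schema.org Article object."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": entry["title"],
        "author": {"@type": "Person", "name": entry["author"]},
        "datePublished": entry["published_date"],
        "articleSection": entry["category"],
    }


def faq_jsonld(entry: dict) -> dict:
    """Map a list of question/answer fields to a schema.org FAQPage object."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": item["question"],
                "acceptedAnswer": {"@type": "Answer", "text": item["answer"]},
            }
            for item in entry["faq_items"]
        ],
    }


def script_tag(data: dict) -> str:
    """Wrap JSON-LD in a script tag, escaping '<' so content cannot close the tag."""
    payload = json.dumps(data, ensure_ascii=False).replace("<", "\\u003c")
    return '<script type="application/ld+json">' + payload + "</script>"


# Hypothetical entry, shaped like the structured fields discussed above.
entry = {
    "title": "What is a DXP?",
    "author": "Editorial team",
    "published_date": "2026-01-15",
    "category": "platforms",
    "faq_items": [{"question": "Is a DXP a CMS?", "answer": "No."}],
}

print(script_tag(article_jsonld(entry)))
print(script_tag(faq_jsonld(entry)))
```

Because the markup is derived from the fields on every render, it changes whenever an editor changes the content, which is the "kept up to date" property the text refers to.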

3. MCP: your DXP as a native tool for AI agents

The Model Context Protocol (MCP) is the open standard published by Anthropic in November 2024 to enable AI agents to connect directly with external tools, APIs and databases. As of April 2026, the official registry lists more than 9,400 MCP servers. OpenAI, Google DeepMind and Microsoft have officially adopted it.

What MCP does is simple but transformative: it allows an AI agent to call your platform’s API directly to retrieve content, instead of having to crawl and parse your website.

A composable DXP with API-first architecture can expose an MCP server over its Content Delivery API with minimal effort. The result is that when a user asks Claude, ChatGPT or Gemini something related to your area of expertise, the agent can query your platform directly, retrieve the most up-to-date answer and cite it with precise context — without depending on a crawler having recently visited your site.

A monolithic CMS can implement MCP, but doing so means building an API layer on top of an architecture that was not designed to be exposed as an API. In a composable DXP, that layer already exists.
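To make the idea concrete, here is a minimal sketch of the request handling an MCP server performs. MCP messages are JSON-RPC 2.0, and tools are discovered with `tools/list` and invoked with `tools/call`. Everything else is an assumption for illustration: the `search_content` tool, the in-memory `CONTENT` list standing in for a Content Delivery API, and the omission of the `initialize` handshake and the transport layer. A production server would normally use an official MCP SDK rather than hand-written dispatch.

```python
import json

# Stand-in for the platform's Content Delivery API (hypothetical data).
CONTENT = [
    {"title": "Master in Design", "category": "programs",
     "body": "Two-year programme taught in Madrid."},
    {"title": "Admission requirements", "category": "admissions",
     "body": "Bachelor degree and English certificate."},
]

# Tool description returned by tools/list; the tool name is hypothetical.
TOOLS = [{
    "name": "search_content",
    "description": "Search published content by keyword.",
    "inputSchema": {
        "type": "object",
        "properties": {"query": {"type": "string"}},
        "required": ["query"],
    },
}]


def search_content(query: str) -> list[dict]:
    """Case-insensitive keyword match over titles and bodies."""
    q = query.strip().lower()
    if not q:
        return []
    return [e for e in CONTENT
            if q in e["title"].lower() or q in e["body"].lower()]


def _error(req_id, code: int, message: str) -> dict:
    return {"jsonrpc": "2.0", "id": req_id,
            "error": {"code": code, "message": message}}


def handle_request(request: dict) -> dict:
    """Dispatch a single JSON-RPC request for the two tool methods."""
    req_id = request.get("id")
    method = request.get("method")
    if method == "tools/list":
        result = {"tools": TOOLS}
    elif method == "tools/call":
        params = request.get("params", {})
        if params.get("name") != "search_content":
            return _error(req_id, -32602, "Unknown tool")
        matches = search_content(params.get("arguments", {}).get("query", ""))
        result = {"content": [{"type": "text", "text": json.dumps(matches)}],
                  "isError": False}
    else:
        return _error(req_id, -32601, "Method not found")
    return {"jsonrpc": "2.0", "id": req_id, "result": result}


response = handle_request({
    "jsonrpc": "2.0", "id": 1, "method": "tools/call",
    "params": {"name": "search_content", "arguments": {"query": "design"}},
})
print(json.dumps(response, indent=2))
```

The point of the sketch is the shape of the work: when content is already queryable as structured data, the MCP layer is a thin adapter over an existing API rather than a new system.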

4. llms.txt: the roadmap for agents

In 2024, Jeremy Howard (Answer.AI) proposed the llms.txt protocol: a Markdown file in the site’s root directory that acts as a navigation map for LLMs — indicating which pages are most important, how the site’s knowledge is organized, and how to access it efficiently.

More than 844,000 sites have implemented it, including Anthropic, Cloudflare, Stripe and Vercel. Adoption grew more than 500% year over year. Google included it in its Agent2Agent (A2A) protocol in April 2025.

In a monolithic CMS, generating an accurate and up-to-date llms.txt requires a manual inventory of published content. In a composable DXP, that inventory already exists in the CMS API: generating and maintaining llms.txt is a task that can be fully automated with a simple integration over the Content API.
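A sketch of that automation, assuming the content inventory has already been fetched from the Content API as a list of dictionaries. The entry shape (`title`, `url`, `description`, `category`), the function name and the example URLs are assumptions; the output follows the layout in the llms.txt proposal: an H1 title, a blockquote summary, then H2 sections containing link lists.

```python
from collections import defaultdict


def build_llms_txt(site_name: str, summary: str, entries: list[dict]) -> str:
    """Render an llms.txt document from a list of published entries."""
    sections: dict[str, list[dict]] = defaultdict(list)
    for entry in entries:
        sections[entry["category"]].append(entry)

    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for category in sorted(sections):
        lines.append(f"## {category}")
        lines.append("")
        for entry in sections[category]:
            lines.append(
                f"- [{entry['title']}]({entry['url']}): {entry['description']}"
            )
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical inventory, as it might come back from a Content API.
entries = [
    {"title": "Master in Design", "url": "https://example.edu/design",
     "description": "Programme overview and curriculum.", "category": "programs"},
    {"title": "How to apply", "url": "https://example.edu/apply",
     "description": "Requirements and deadlines.", "category": "admissions"},
]

print(build_llms_txt("Example University", "Programs and admissions information.", entries))
```

Running this on every publish event (or on a schedule) keeps the file in step with the content inventory without a manual audit.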

The components of a modern DXP

A composable DXP is built on these layers:

1. Content management core (headless CMS): The editorial engine. Stores and structures content independently of how it will be presented. Editors work here; the channels (websites, apps, AI agents) consume the content via API.

2. Presentation layer: The interface the end user sees. In a composable DXP it can be a website, a native app, an intranet portal or any interface that consumes the CMS API, including an AI agent’s response.

3. Personalization engine: Connects user profiles with content. Determines what each user sees based on who they are, what they have done before and where they are in their journey.

4. Data and audience management: Centralizes user data from multiple sources (web, CRM, forms, prior interactions) to build unified profiles.

5. Analytics and intelligence: Measures user behavior, content performance and campaign impact. In a mature DXP, this layer includes visibility into citations in generative engines, not just direct web traffic.

6. Integrations and API exposure layer: Connects with the external ecosystem: CRM, email platforms, recruitment systems, conversion tools. And, increasingly, exposes content as a source consumable by AI agents via MCP or open APIs.
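Of these layers, the personalization engine is the easiest to illustrate in code. The sketch below shows one simple way such an engine could choose content: each variant declares the profile attributes it targets, and the most specific matching variant wins. The `Visitor` and `Variant` types, the attribute names and the "most conditions wins" rule are all assumptions for this example, not a description of how any specific DXP works.

```python
from dataclasses import dataclass


@dataclass
class Visitor:
    audience: str      # e.g. "prospective_student", "alumni"
    language: str      # e.g. "en", "es"
    funnel_stage: str  # e.g. "awareness", "consideration"


@dataclass
class Variant:
    content_id: str
    conditions: dict[str, str]  # visitor attribute name -> required value


def pick_variant(visitor: Visitor, variants: list[Variant], default_id: str) -> str:
    """Return the matching variant with the most conditions, else the default."""
    best_id, best_score = default_id, 0
    for variant in variants:
        matches = all(
            getattr(visitor, attr, None) == value
            for attr, value in variant.conditions.items()
        )
        if matches and len(variant.conditions) > best_score:
            best_id, best_score = variant.content_id, len(variant.conditions)
    return best_id


# Hypothetical variants of a homepage hero block.
variants = [
    Variant("hero-generic-en", {"language": "en"}),
    Variant("hero-admissions", {"audience": "prospective_student", "language": "en"}),
    Variant("hero-alumni", {"audience": "alumni"}),
]

visitor = Visitor(audience="prospective_student", language="en", funnel_stage="consideration")
print(pick_variant(visitor, variants, default_id="hero-default"))
```

In a composable setup this logic lives in its own component and receives the visitor profile from the data layer, so it can be replaced without touching the content core.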

When do you need a DXP? The key signals

A CMS is sufficient if you have an informational website, a small editorial team and a single digital channel. In that context, the complexity of a DXP is not justified.

A DXP starts to make sense when some of these situations appear:

- You manage multiple sites or subdomains with different editorial teams that need both brand coherence and local autonomy

- Personalization is part of the business, not an extra: different audiences need to see different content based on their profile, language or funnel stage

- You have user data you are not using because it lives in silos disconnected from each other

- The marketing team can’t publish without going through IT for every change

- You operate across multiple channels — web, app, email, internal portals — and manage content for each one separately, with the duplicated work that implies

- Visibility in generative engines is starting to matter and your current architecture has no way to optimize for it systematically

- Security and uptime are non-negotiable priorities and you need a platform that handles patches without depending on your internal technical team

If you recognize three or more of these situations, the DXP conversation is justified.

DXP for universities: a particular case

Universities bring together, in a single institution, almost all the scenarios that make a DXP necessary.

They manage dozens of sites: the corporate website, the websites of each faculty, the admissions portals, the postgraduate sites, the student intranet, the campaign microsites. They have multiple editorial teams — marketing, communications, registrar, dean’s offices — with different needs and rhythms. They serve audiences with very different profiles: prospective students, current students, alumni, faculty, partner companies.

And they do all of this, frequently, on a traditional CMS that was never designed for that scale.

The AEO dimension adds an extra layer of urgency. When a prospective student asks Perplexity “which is the best university to study design in Madrid?” or asks ChatGPT “what is the difference between IE’s master’s in digital management and ESADE’s?”, the answer does not depend on the university’s SEO ranking. It depends on whether that institution’s content is structured, accessible and cited enough for the agent to include it in its response.

A composable DXP allows universities to manage their content as structured data — academic programs, admission requirements, faculty profiles, alumni success stories — in a format that AI agents can consume and cite directly. A monolithic CMS buries that content in HTML templates that agents have to decompose.

IE University and ESADE already operate on Griddo DXP. At IE University, the migration to a MACH architecture reduced the share of engineering time spent on platform maintenance from 40% to 5%, freeing capacity for higher-value strategic projects. Campaign landing pages went from taking weeks to publish to going live in minutes.

Conclusion

A composable DXP is not a more expensive version of the CMS. It is an architectural response to a different reality: that of organizations managing multiple channels, multiple audiences, multiple teams — and now also multiple types of content consumers, including AI agents.

The web has always had two types of visitors: people and crawlers. Today it has three. The AI agents that power Perplexity, ChatGPT, Gemini and Claude don’t just index: they reason, cite and recommend. And they do so with a conversion profile that traditional organic traffic has never matched.

Being prepared for that third visitor is not a content tactic question. It is an architecture question. A composable DXP — with its content structured as data, its API delivery, its ability to expose MCP servers and its native compatibility with schema markup — is designed for this scenario. A monolithic CMS can adapt, but doing so requires layers of additional work that accumulate and become more costly over time.

Want to see how Griddo DXP works in a real university? Request a demo and we’ll show you the full ecosystem in 30 minutes.

Related articles

- GEO: the future of digital positioning — What Generative Engine Optimization is and what it implies for university digital strategy.

- MACH architecture for university CIOs — The architectural model broken down in detail for technical teams.

- The vendor lock-in myth vs the version lock-in reality — The costs of not migrating that almost no one quantifies.

- “Free” is expensive: the hidden costs of open source — The real TCO of an open source CMS compared to a managed platform.

- The university web ecosystem: building a cohesive vision — What managing multiple sites looks like from a DXP.